DoubleCloud’s 13th Product Update

Hey everyone! Victor here with the latest news.

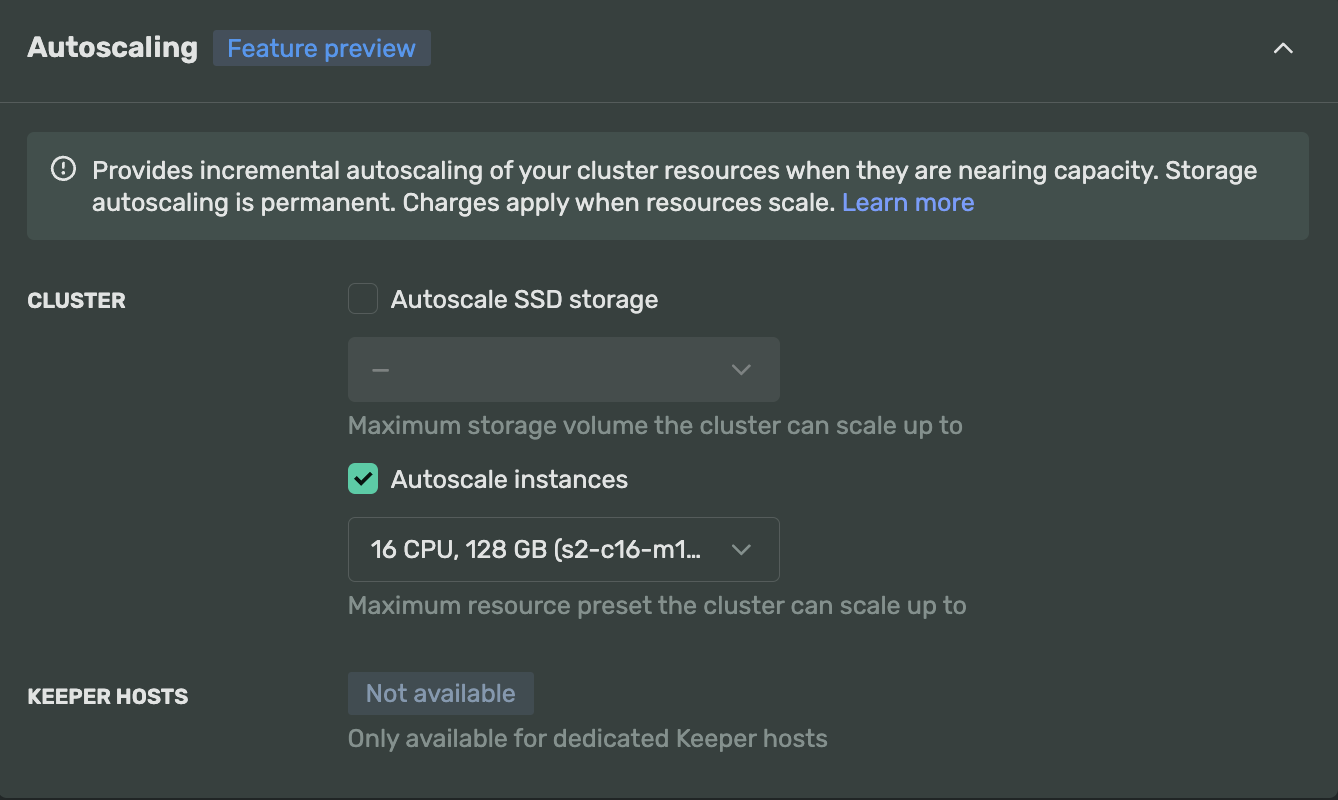

ClickHouse and Apache Kafka compute vertical autoscaling

Finally, the long-awaited autoscaling for compute has arrived. We are introducing compute vertical autoscaling in preview for all users of our Managed ClickHouse and Managed Apache Kafka services.

With disk autoresizing and compute autoscaling combined, you can stop worrying about your service being under-provisioned during load spikes or high-traffic days and hours. Once the feature is enabled, we automatically monitor CPU load on the cluster’s instances and scale compute up when the load has stayed high over the last 5 minutes. When the load decreases, the cluster automatically scales back down. This keeps the cluster at the size it needs and avoids paying for over-provisioned resources when they are not needed.

You can explicitly enable this feature while creating a cluster or modifying an existing one. You can find more details about this new functionality in the docs.
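
To make the "sustained load over the last 5 minutes" idea concrete, here is a minimal sketch of such a decision rule. It is not our actual implementation: the thresholds, the one-sample-per-minute window, and the size names are all assumptions chosen for illustration.

```python
from collections import deque
from dataclasses import dataclass, field

# All values below are illustrative assumptions, not real service settings.
WINDOW_SAMPLES = 5            # e.g. one CPU sample per minute over 5 minutes
SCALE_UP_THRESHOLD = 0.80     # hypothetical: every sample above 80% CPU
SCALE_DOWN_THRESHOLD = 0.30   # hypothetical: every sample below 30% CPU
SIZES = ["s", "m", "l", "xl"]  # hypothetical instance sizes, smallest first


@dataclass
class VerticalAutoscaler:
    size_index: int = 0
    samples: deque = field(default_factory=lambda: deque(maxlen=WINDOW_SAMPLES))

    def observe(self, cpu_load: float) -> str:
        """Record one CPU sample (0.0-1.0) and return the resulting instance size."""
        self.samples.append(cpu_load)
        if len(self.samples) == WINDOW_SAMPLES:
            can_grow = self.size_index < len(SIZES) - 1
            can_shrink = self.size_index > 0
            if min(self.samples) > SCALE_UP_THRESHOLD and can_grow:
                # Load was high for the whole window: move one size up.
                self.size_index += 1
                self.samples.clear()
            elif max(self.samples) < SCALE_DOWN_THRESHOLD and can_shrink:
                # Load was low for the whole window: move one size down.
                self.size_index -= 1
                self.samples.clear()
        return SIZES[self.size_index]
```

Requiring every sample in the window to cross the threshold means a single short spike does not trigger a resize. Only load that stays high (or low) for the full window does.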

In this article, we’ll talk about:

- ClickHouse and Apache Kafka compute vertical autoscaling

- Airflow custom container images

- Permissions and users in ClickHouse

- Organization member groups

- Transfer service progressive discounts

- BigQuery as target connector

- New ISO 27001:2022 certification

- Visualization service UI/UX update

- Quality of life improvements

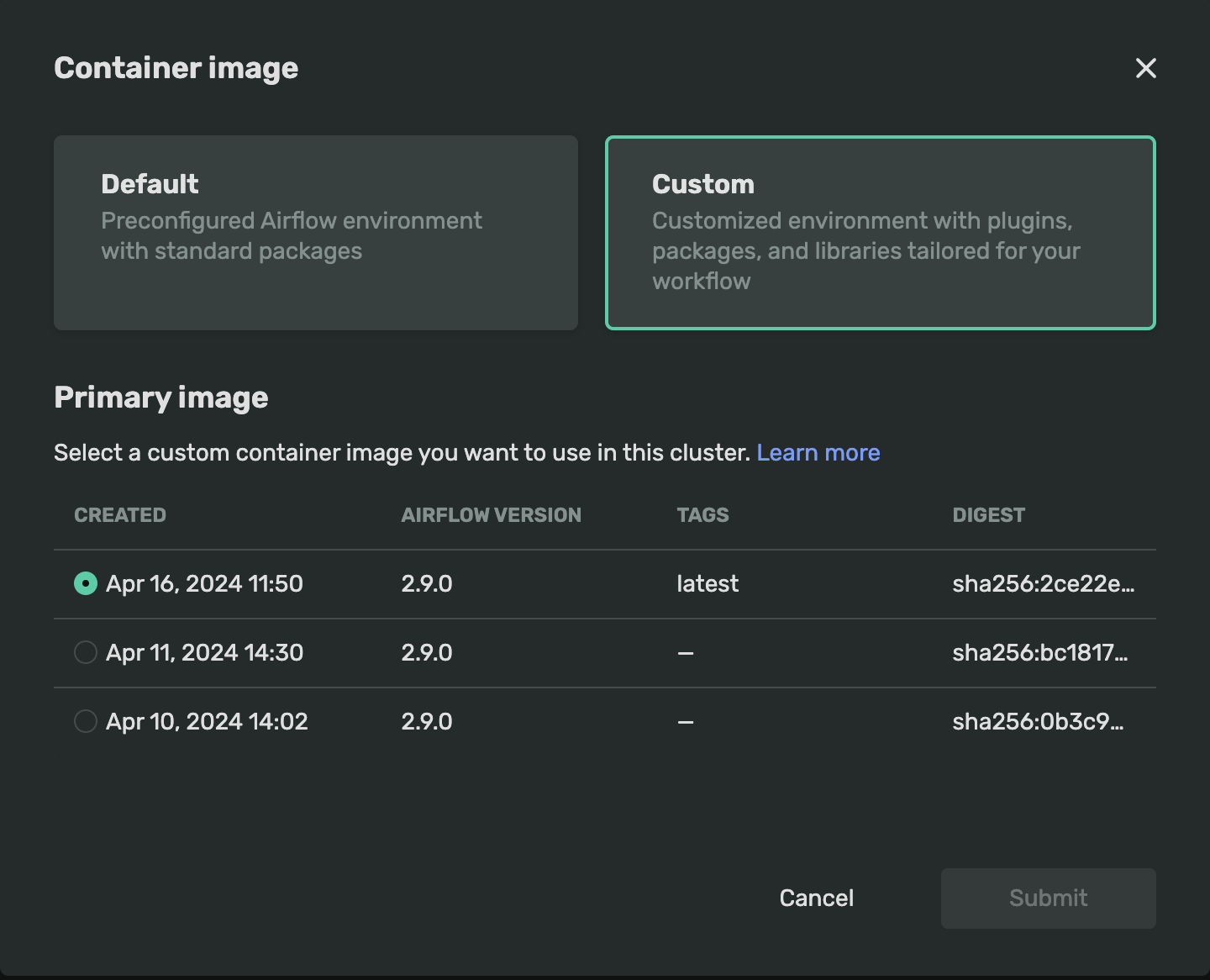

Airflow custom container images

You can now customize container images used by Airflow workers. Airflow is employed in various scenarios, such as orchestrating data pipelines or machine learning pipelines. In some cases, you may need to perform calculations or use Airflow workers for data transformations, among other tasks. These scenarios often require customizing your workers' runtime environment to include extra packages like scikit-learn, pandas, or specific SDKs/drivers for connecting to external services or systems.

Now, you can create a custom container image based on the default Docker image, push it to our registry, and use it as the runtime for your workloads.

For more details on how to set up custom images, please refer to our documentation.
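
Once a custom image is in use, it can be handy to confirm that the extra packages really are present in the worker runtime. The snippet below is a small sketch of such a check using only the Python standard library. The package list is a hypothetical example (note that scikit-learn is imported as `sklearn`).

```python
import importlib.util

# Hypothetical list of import names expected in a custom worker image.
REQUIRED_PACKAGES = ["pandas", "sklearn"]


def missing_packages(names):
    """Return the top-level import names that cannot be found in this environment."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_packages(REQUIRED_PACKAGES)
    if missing:
        print("Missing from this image:", ", ".join(missing))
    else:
        print("All required packages are available.")
```

A check like this could run as the first task of a pipeline, so that a misconfigured image fails early instead of partway through a transformation.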

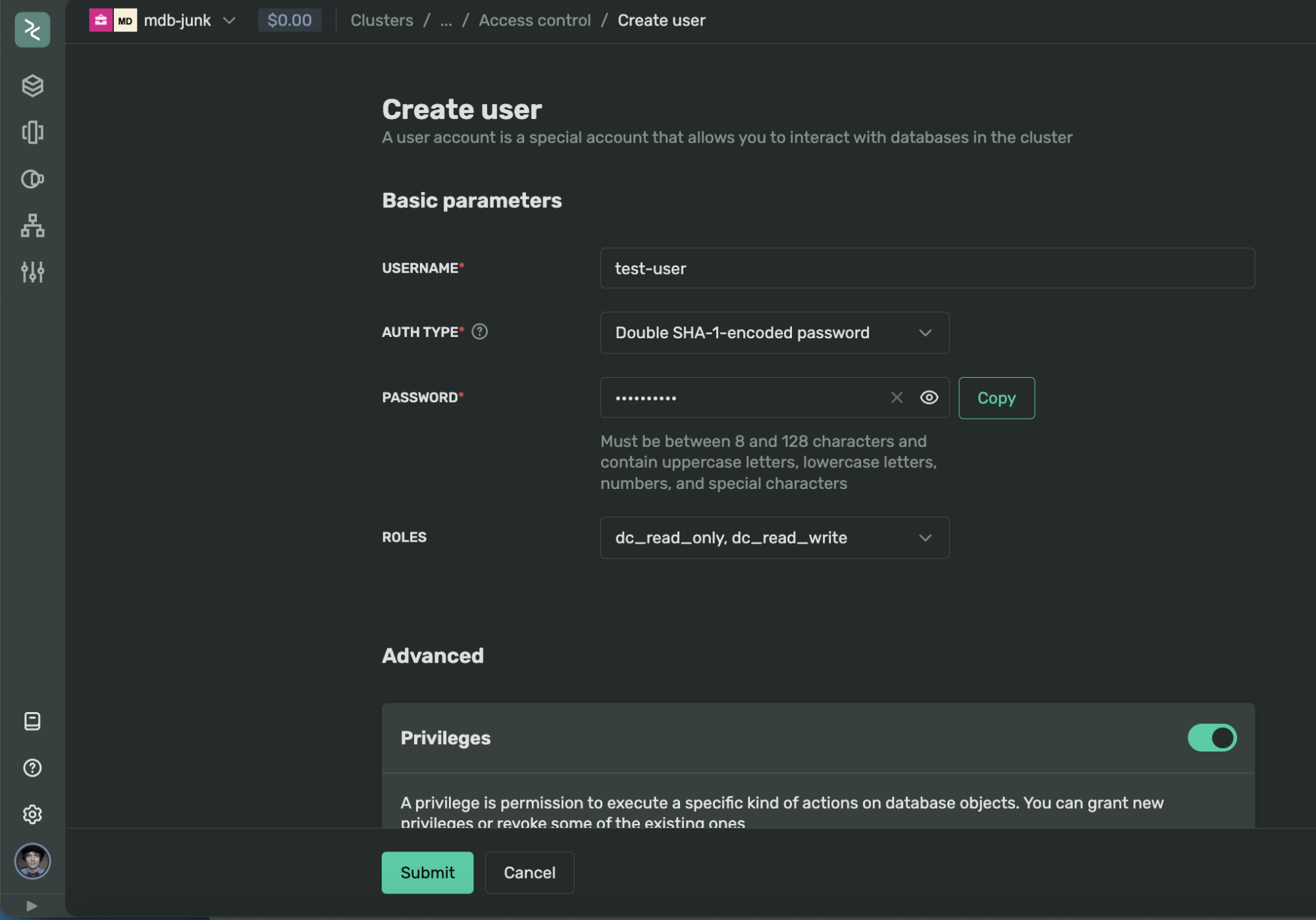

Permissions and users in ClickHouse

Managing users and privileges in ClickHouse is now possible through our console UI, API, and soon via Terraform. You can effortlessly create and manage users and their permissions directly in the UI without connecting to ClickHouse instances through a terminal. Additionally, you can modify user settings, establish new roles with various privileges, and perform other related tasks through our new UI. This feature is accessible from the cluster page under 'Access Control'.

For more information, please check out our step-by-step instructions.
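
For comparison, the terminal workflow that the UI replaces comes down to a handful of ClickHouse SQL statements. The sketch below builds such statements for a read-only user. The function name, the user, role, and database names, and the `<password>` placeholder are hypothetical, and this is not a description of what the console does internally.

```python
import re

_IDENTIFIER = re.compile(r"^[A-Za-z_][A-Za-z0-9_]*$")


def _ident(name):
    """Accept only simple identifiers so names can be placed in SQL safely."""
    if not _IDENTIFIER.match(name):
        raise ValueError(f"unsafe identifier: {name!r}")
    return name


def read_only_user_statements(user, role, database):
    """Build ClickHouse SQL: a role with SELECT on one database, granted to a new user."""
    user, role, database = _ident(user), _ident(role), _ident(database)
    return [
        f"CREATE ROLE IF NOT EXISTS {role}",
        f"GRANT SELECT ON {database}.* TO {role}",
        # '<password>' is a placeholder; supply a real secret when running this.
        f"CREATE USER IF NOT EXISTS {user} IDENTIFIED WITH sha256_password BY '<password>'",
        f"GRANT {role} TO {user}",
    ]


if __name__ == "__main__":
    for statement in read_only_user_statements("analyst", "reporting", "analytics"):
        print(statement + ";")
```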

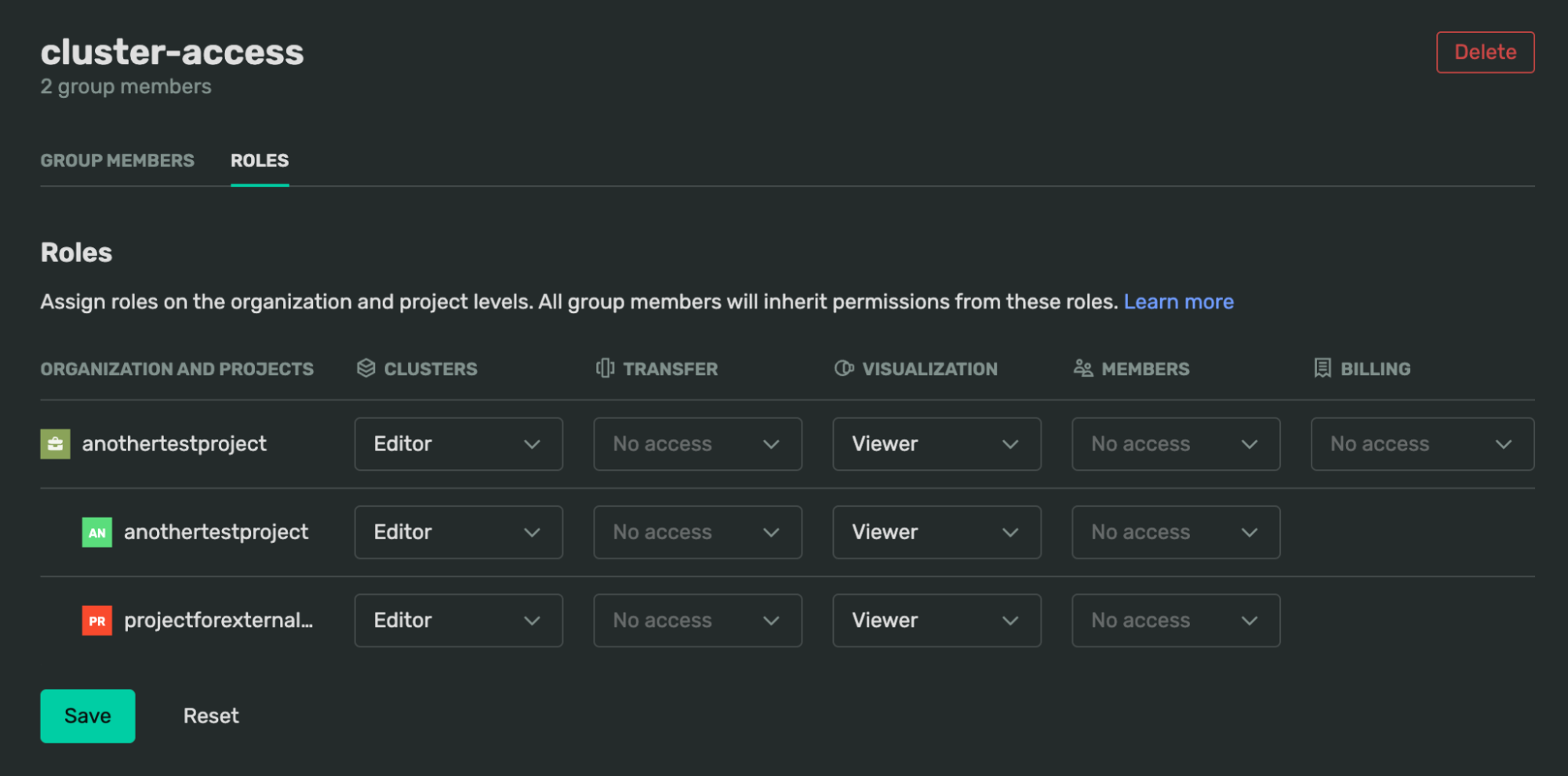

Organization member groups

To simplify the management of many users and their permissions, we have introduced IAM Groups in the organizational view.

You can now create a new group, add current members, and assign specific permissions with project-level granularity. For example, the accompanying image illustrates how you can easily create a user group that can manage clusters in a particular project but will only have viewer permissions for the Visualization service.

For more details on managing groups in our console, please see here.

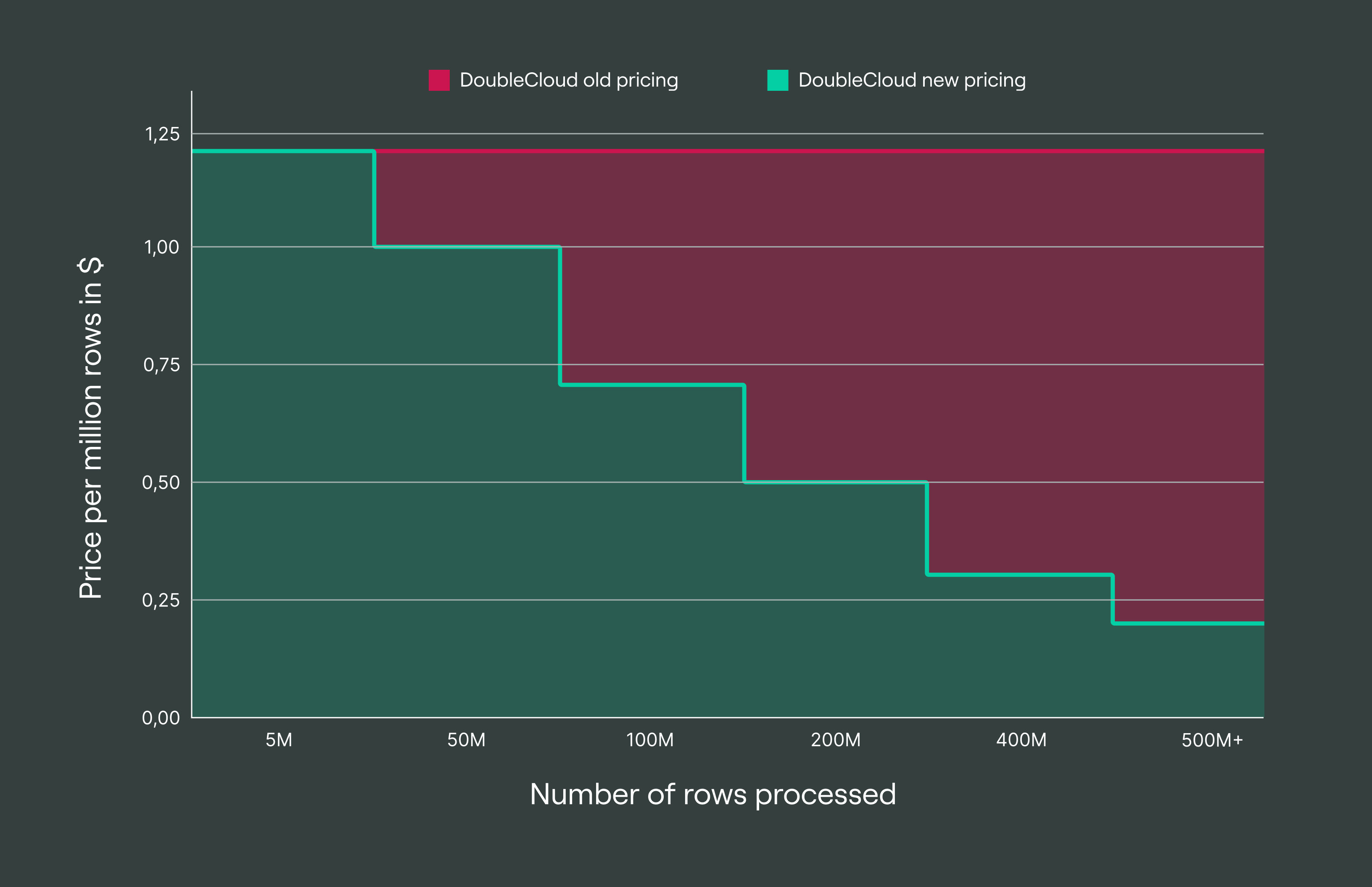

Transfer service progressive discounts

One of the primary uses of the DoubleCloud Transfer service is synchronizing data from PostgreSQL to ClickHouse or from Apache Kafka to ClickHouse. In these cases, users typically stream large volumes of data at high speeds, ranging from 1 to 10 megabytes per second, which previously incurred high costs.

We have decided to revise our pricing structure and introduce dynamic pricing discounts to align with this usage pattern. Now, the more data you process, the less you pay. Starting at $1.20 per 1 million processed rows, the price gradually decreases to $0.20 after the number of rows exceeds 400M.
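
To see how a volume discount of this kind adds up, here is a rough calculator sketch. Only the starting rate ($1.20 per 1M rows) and the final rate ($0.20 per 1M rows above 400M) come from the text above. The tier boundary at 100M, the intermediate $0.60 rate, and the assumption that each rate applies only to the rows inside its own tier are made up for illustration and do not reflect the actual price list.

```python
# (upper bound in millions of rows, price in cents per 1M rows)
# Only 120 (start) and 20 (above 400M) come from the article;
# the 100M boundary and the 60-cent tier are hypothetical.
EXAMPLE_TIERS = [
    (100, 120),
    (400, 60),
    (float("inf"), 20),
]


def transfer_cost_usd(million_rows, tiers=EXAMPLE_TIERS):
    """Cost in USD, assuming each tier's rate applies only to rows inside that tier."""
    cost_cents = 0
    lower = 0
    for upper, cents_per_million in tiers:
        if million_rows <= lower:
            break
        rows_in_tier = min(million_rows, upper) - lower
        cost_cents += rows_in_tier * cents_per_million
        lower = upper
    return cost_cents / 100


if __name__ == "__main__":
    for volume in (50, 250, 500):
        print(f"{volume}M rows -> ${transfer_cost_usd(volume):.2f}")
```

Under these example tiers, the average price per million rows keeps falling as volume grows, which is the "more data, lower price" effect described above.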

Take a look at our new pricing structure:

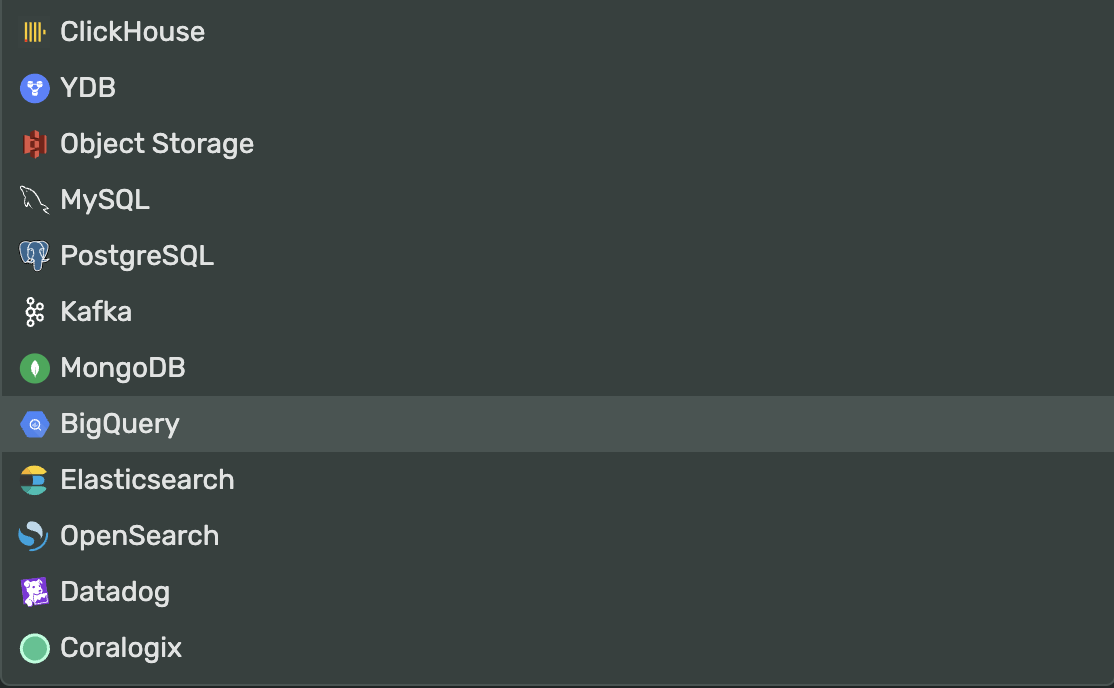

BigQuery as target connector

In addition to our 21 source and 12 target connectors, we have launched a new one: BigQuery. This connector can export data from the ClickHouse or Apache Kafka service to BigQuery. Please find more details here.

New ISO 27001:2022 certification

We at DoubleCloud take threats to the availability, integrity, and confidentiality of our clients’ information seriously. As such, DoubleCloud is an ISO/IEC 27001:2022 certified provider: our Information Security Management System (ISMS) has been independently certified by a third party against the standard published by the International Organization for Standardization (ISO).

This certification demonstrates DoubleCloud’s continued commitment to information security at every level. It ensures that the security of your data and information has been addressed, implemented, and properly controlled in all areas of our organization.

To view all our certifications or to request assessment access, please visit our Trust Center.

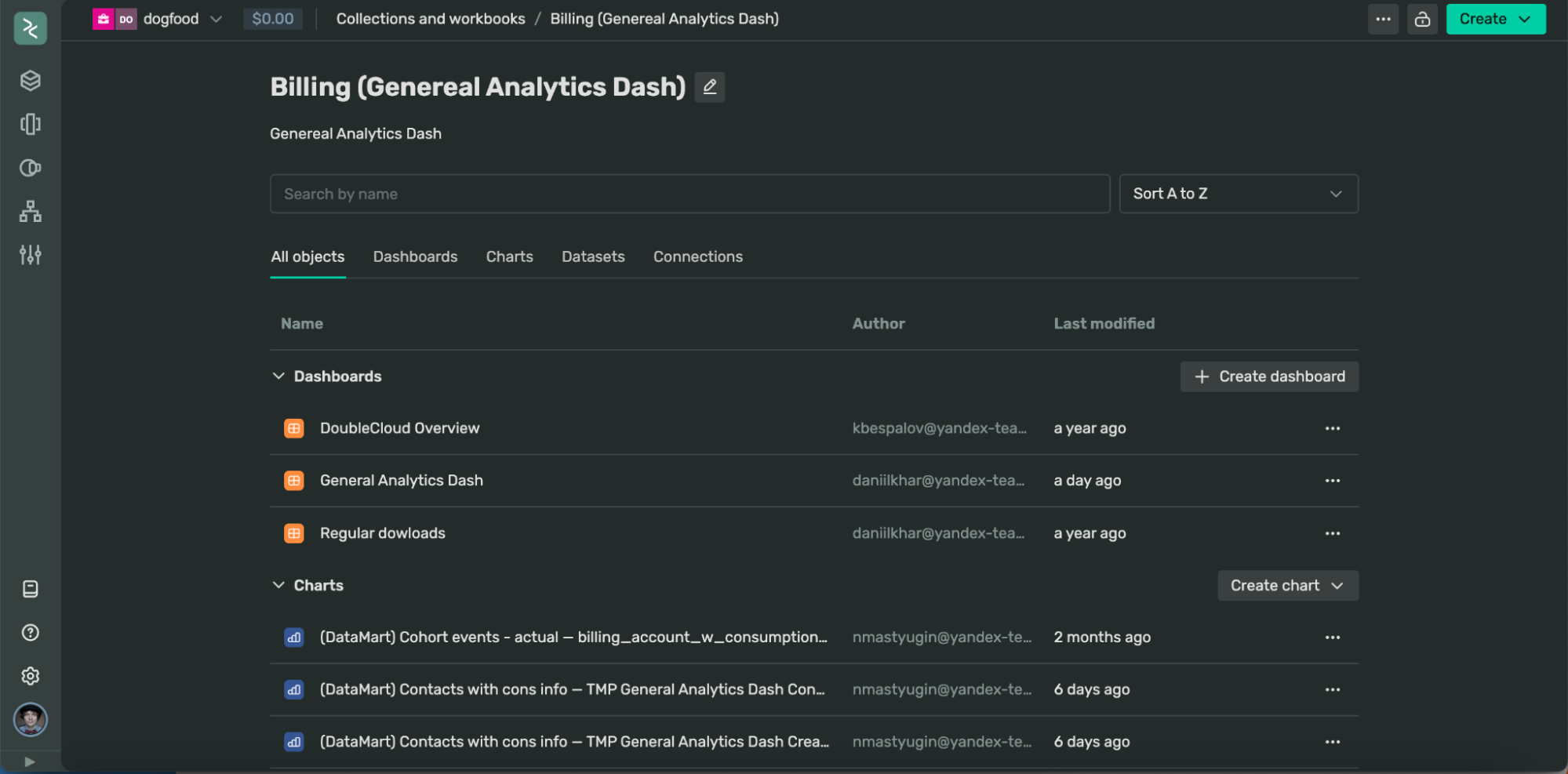

Visualization service UI/UX update

We recently updated our DoubleCloud Visualization service by incorporating many features from the open-source version released over the last few months. Below is a subset of the changes:

- Workbook page redesign: you can now see all related objects in one place, with quick actions

- Added export to Excel

- New datepicker with relative dates for parameters in QL charts

- Improved dashboard entry creation: there’s no need to fill in the name first

- Collections & workbooks sorting fix

- Added required selector option

- Support for the inner label in datepicker control

The complete list of changes is available here, covering the period from December 21, 2023, to March 16, 2024.

Quality of life improvements

Here’s a list of some minor but potentially pivotal improvements that simplify workflows and day-to-day tasks:

- We’ve added Apache Kafka consumer lag metrics to the Prometheus export endpoint.

- We recently had an incident whose root cause was a ClickHouse bug related to updating TLS certificates. We fixed the issue in the upstream ClickHouse repository, and the fix has been merged.

- We’ve updated the UI controls across our console for easier usage. Reading and understanding forms and subforms is now more straightforward, especially in complex areas like ClickHouse cluster settings.

- We’ve made ClickHouse and Apache Kafka cluster creation faster, reducing the setup time by 2 minutes and bringing the average creation time down to around 5 minutes.

- New ClickHouse Long-Term Support (LTS) versions 24.3 and 24.2 have been added, along with Apache Kafka version 3.5.2. While these versions are marked as LTS, we still recommend waiting until they’re stabilized.

- Check out the new DoubleCloud Visualization Embedded showcase page, where you can view examples of embedded charts and learn how to integrate similar visuals into your web application.

- We have added Python examples of managing Topics, Users, and ACLs in DoubleCloud Managed Service for Apache Kafka.
